The Ideal of the Music Orchestrator

Everyone loves it when musicians perform music well. Very good musicians naturally interpret the music orchestrator’s wishes and bring a little extra soul to the performance too. Each musician has a lifetime of musical technique, knowledge, practice and devotion to music, all in focus when they play. It comes at a price, however – excellent musicians command high fees, and the additional costs of scoring written parts, scheduling musicians and recording studio time can be significant. If your budget won’t stretch that far, software instruments can provide an excellent alternative.

Software Instruments

Also known as Virtual Instruments (VIs), nowadays these use libraries of sounds recorded from real instruments, far superior to the synthetic keyboard sounds of yesteryear. A music orchestrator experienced in their use can create a convincing performance with a MIDI scoring program or sequencer. I used software instruments for my latest composition Eileen, a piece that has touched many people’s hearts.

Eileen is written for string quartet and piano. Viola, cello & piano are featured instruments, so it was important they sounded realistic. Otherwise a casual listener might notice they are software instruments, which would distract from the emotional impact. The solo violin, viola & cello in Miroslav Philharmonik 2 are very expressive, and the piano has a dark, rich tone that suits the romantic mood of Eileen, so that’s what I used throughout. But good tone alone is not enough to give a credible performance. Recall the lifetime’s experience a musician brings when playing – how can that be achieved by a music orchestrator and a MIDI-based software instrument? To start with, it’s crucial to understand how the real instrument is played.

Software Strings

For string players, bow speed and pressure, vibrato, note attack and release, phrasing, slides and even breathing are all involved. These can all be emulated in Miroslav Philharmonik 2. The stringed instruments are sampled at various volumes, and automatically react to volume changes in the MIDI orchestration. Several bow techniques are available; in general, “sustain” suited the quieter sections, while “detache” was best in lively sections. Often it is beneficial to change articulations within a single phrase.

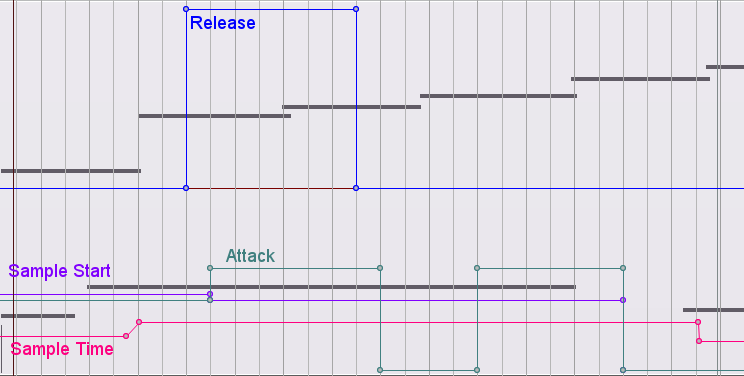

Slurs, where one note blends smoothly into the next, are crucial for realistic string phrasing. They can often be achieved by altering the Attack and Release time of the instrument. Another useful technique is to skip the first part of the second note using the “Sample Start” time, which avoids the start of the bow stroke, whether gentle or loud. How these are applied depends on note pitches, lengths and velocities, and the music orchestrator must adjust them individually for each group of notes. I used automation in my Digital Audio Workstation, Reaper, to control these parameters through the ReaControlMIDI plugin. Here’s a small section of the viola MIDI, an ascending passage of six notes at a key point in the piece:

The high notes are the ones that are actually played. The lower notes are inaudible keyswitches used to select the bow technique (articulation) – the first is sustain, the second is detache, the third is sustain. The project is based on a template for SampleTank 3 I’ve uploaded to the Reaper Stash.

- The second and third notes overlap, going some way to creating a slur, although some tweaking of the attack and release of the third note was required to make it really smooth.

- The third and fourth notes do not overlap, as I wanted them to sound separately. Attack is set to zero to reinforce this.

- The fourth and fifth notes are slurred in a similar way to the second and third.

- Sample time is used to lengthen notes that are too short, or to speed up or slow down vibrato to suit the tempo and emotional feel.
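For readers who think in code, the overlap and keyswitch ideas above can be sketched in a few lines of Python. This is only an illustration, not how Reaper or Miroslav Philharmonik 2 store anything: the `Note` class, the function names, the keyswitch pitches (24 and 26), the note lengths and the overlap amount are all assumptions made up for the example.

```python
from dataclasses import dataclass

# Hypothetical keyswitch pitches (MIDI note numbers). The real values depend
# on the instrument preset and are not taken from the article.
KEYSWITCHES = {"sustain": 24, "detache": 26}


@dataclass
class Note:
    start: float   # position in beats
    length: float  # duration in beats
    pitch: int     # MIDI note number
    velocity: int = 80


def apply_slurs(notes, slurred_pairs, overlap=0.05):
    """Return copies of `notes` in which the first note of each slurred pair
    is lengthened so that it overlaps the start of the next note.

    `slurred_pairs` holds the index of the first note of each pair.
    """
    out = [Note(n.start, n.length, n.pitch, n.velocity) for n in notes]
    for i in slurred_pairs:
        following = out[i + 1]
        out[i].length = (following.start - out[i].start) + overlap
    return out


def keyswitch_events(changes, lead=0.1, length=0.1):
    """Turn (beat, articulation) pairs into short, low keyswitch notes placed
    slightly before the beat, so the articulation is set before the note sounds."""
    return [
        Note(max(0.0, beat - lead), length, KEYSWITCHES[name], 64)
        for beat, name in changes
    ]


# Six ascending notes, one per beat, each slightly shorter than a beat
# (pitches and timings are invented for illustration).
phrase = [Note(float(i), 0.9, 60 + 2 * i) for i in range(6)]

# Slur notes 2-3 and 4-5 (indices 1 and 3); notes 3-4 stay separate.
slurred = apply_slurs(phrase, [1, 3])

# Sustain, then detache, then sustain again, as in the passage described above.
switches = keyswitch_events([(0.0, "sustain"), (2.0, "detache"), (4.0, "sustain")])
```

The overlap only gets part of the way to a slur. The attack, release and sample start adjustments described above still have to be made by ear for each pair of notes.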

It’s a lot of work to make only six notes sing, but worthwhile since they are a key part where the effect is heard and appreciated. Doing this for every note would take a long time, so if this detail is required throughout it might be best to use a real musician who’ll give that attention to detail to every note in real time. For those on a tight budget, having the music orchestrator combine real musicians for foreground parts with software instruments for background parts can get a sound close to an entire group of real musicians, but at lower cost.

Real Time

Real musicians don’t play to strict tempo like a computer. Their rhythm ebbs and flows with the music, giving it heart, soul, feel or groove. A robotic tempo can send a strong signal to the listener that the music is not “real”. In some genres this is desirable (think Kraftwerk!) but often it is not. Even casual listeners might pick it up subconsciously. The music may sound good on the surface, but feet won’t be tapping, and souls won’t be touched.

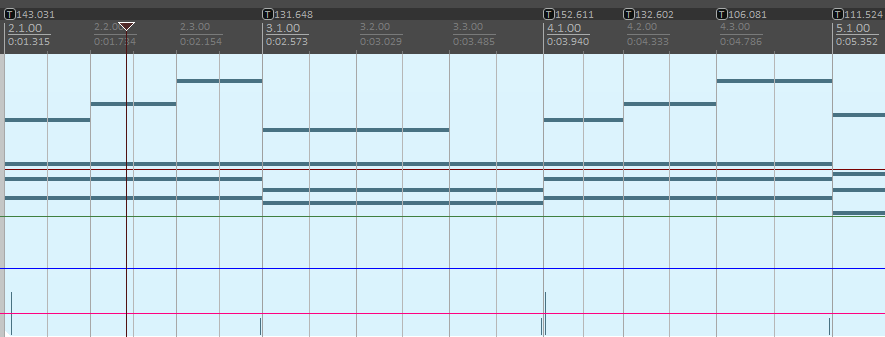

Eileen began as a minute-long piece of music I improvised on piano with a gently romantic, free rhythm. I wanted to carry this over to other parts of the music when I developed it into a longer piece. Writing and orchestrating music on a computer is far easier when the notes are aligned to a grid of “bars”, but the default grid is at strict tempo and would have made the music robotic. So instead I aligned the grid to the music. Note the following section of timeline where the tempo markers [T] vary at least once per bar. I aligned them by hand to the piano notes in the MIDI item with the SWS action “Move closest grid line to mouse cursor”. Piano phrases could then be altered or moved anywhere in the piece with ease and still retain a human feel.
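The arithmetic behind aligning the grid to the music is simple: if two successive beats were played a certain number of seconds apart, the tempo for that stretch is 60 divided by that gap. Below is a minimal Python sketch of that calculation. It is not the SWS action itself; the function name and the beat times are invented for illustration, and it assumes the tempo stays constant between markers, ignoring any gradual tempo transitions a DAW may offer.

```python
def tempo_markers(beat_times):
    """Given the times (in seconds) at which successive beats were actually
    played, return (time, bpm) pairs such that each grid beat lands on the
    played beat, assuming constant tempo between markers."""
    if len(beat_times) < 2:
        raise ValueError("need at least two beat times")
    markers = []
    for t0, t1 in zip(beat_times, beat_times[1:]):
        if t1 <= t0:
            raise ValueError("beat times must be strictly increasing")
        markers.append((t0, 60.0 / (t1 - t0)))
    return markers


# Hypothetical beat times from a freely played piano phrase: the second beat
# lingers a little, and the last one pushes forward.
played = [0.0, 1.0, 2.25, 3.25, 4.0]
print(tempo_markers(played))
# [(0.0, 60.0), (1.0, 48.0), (2.25, 60.0), (3.25, 80.0)]
```

A marker on every beat is the extreme case. In practice one or two per bar, as in the timeline described above, may be enough to keep the grid following the performance.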

You may have noticed that the notes in the viola part were not aligned to the grid. I played these in using my MIDI keyboard, and they sounded good, so I left them as recorded. Knowing when to tidy up the rhythm and when to leave it alone is an essential quality of a good music orchestrator. A group of real musicians will not all be playing at exactly the same time. The grid should only be aligned to the main rhythmic instrument, in this case the piano.

Ambience (Mis)match

If a music orchestrator is virtually creating a group of musicians playing together in a room, it should sound like a group of musicians playing together in a room! When I sent my initial mix of Eileen to Eddie MacArthur for evaluation, he said it did not, because I had used reverb on the strings but not on the piano. This gave the impression they were in different rooms. I remixed using the same Theatre Reverb effect from Miroslav Philharmonik 2 for both instruments, making everything sound far more together.

What’s best for my project?

If you’d prefer a completely real sound, then it’s probably best to use real musicians exclusively. I have a large network of professional musicians and can arrange for them to record in my studio on behalf of clients. With overdubs I can turn one musician into many, so the cost may be less than you anticipate.

A mixture of real musicians and software instruments, orchestrated by me, can come close to the sound of 100% real musicians. Some instruments are more convincingly emulated in software than others, and I can advise how to get the best result. This can be a very cost-effective way of getting a recording that will sound real to the majority of listeners.

But if your budget won’t stretch to any real musicians at all, take heart, because a beautiful recording like Eileen is still possible using only software instruments.
